This article is part of a series that provides practical advice and guidance on how to leverage the Continuous Architecture approach. All these articles are available on our “Continuous Architecture in Practice” blog. Here we will present some additional thoughts about implementing the “Minimum Viable Architecture” concept that we discussed in our previous article[1] and will illustrate those thoughts with a fictional example.

“Minimum Viable Architecture” Has Become a Viable Concept

In pre-cloud days, the need for upfront architecture efforts was often driven by long lead times in provisioning infrastructure. Software architects and engineers needed to plan ahead to make sure that the infrastructure they needed would be available in all the environments where their software system would be running (such as development, system test, and production). With the advent of cloud (especially vendor clouds) and the concept of infrastructure on-demand, this limitation has gone away, and provisioning infrastructure is no longer in the critical path of delivering software systems. As a result, using a Minimum Viable Architecture (MVA) strategy to bring a software product to market faster with a better return on investment has become a more viable approach. But what exactly do we mean by “Minimum Viable Architecture”? As we mentioned in our previous article, a minimum viable architecture is usually created by software development teams who adopt the following approach:

- They initially develop just enough architecture to meet the known quality attribute requirements of a software system, so that they can quickly create a system viable enough to be used in production.

- Then they continuously augment the initial software system to meet additional requirements or requirement changes as they are defined over time. Keeping the architecture flexible is essential, and leveraging CA Principle 3 (“Delay design decisions until they are absolutely necessary”) is an effective way to accomplish this objective.

This brief overview of the MVA approach is rather conceptual, so let us walk through an example to make it more actionable and show how the MVA approach can be used to develop a chatbot. The example has five steps. In this article, we will walk through the first three. In the second article, we will cover steps 4 and 5 and conclude with some additional thoughts on the definition and value of an MVA.

Implementing a chatbot using the MVA approach

What exactly is a chatbot? It can be defined as a software service that can act as a substitute for a live human agent, by providing an online chat conversation via text or text-to-speech[2].

A chatbot is a good candidate for inclusion in the scope of many software systems, such as trade finance systems. “Trade finance” is the term used for the financial instruments and products that companies use to facilitate global trade and commerce. Trade finance specialists in financial institutions often receive a high volume of customer queries by telephone and email, and spend valuable time researching and answering questions. Automatically handling a portion of those queries with a chatbot would make them more productive.

Step 1 – Initial MVA: a simple menu-driven chatbot

Our first step in the MVA approach is to apply CA Principle 1 (“Architect Products – Not Just Solutions for Projects”) and create an architectural design for the implementation of a Minimum Viable Product (MVP). In our example, the MVP is a simple chatbot that can be extended as the need arises. The goal at this stage is to avoid incorporating too many requirements, whether functional or quality attribute requirements, in the initial design, depicted below. Our MVP is not a throwaway product – we will add capabilities to it and incrementally build its architecture in steps 2 to 5.

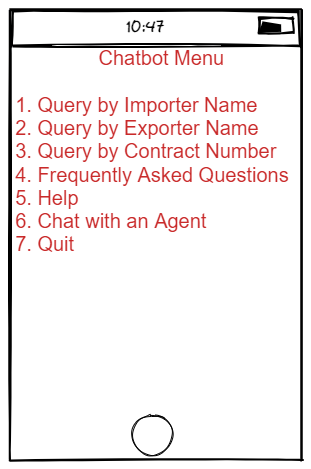

An open-source, reusable framework[3] can be used to implement a range of customer service chatbots, from simple menu-based ones to more advanced ones that use Natural Language Understanding (NLU). Using this framework, the first MVA design supports the implementation of a menu-based, single-purpose chatbot capable of handling straightforward queries. This simple chatbot presents a short list of choices to its users on smartphones, tablets, laptops, or desktop computers.

We need to keep in mind CA Principle 2 (“Focus on Quality Attributes – not on Functional Requirements”) when creating the architectural design for the MVP. There are few quality attribute requirements at this time and no concerns with performance or scalability, since the MVP deployment will be limited to a small user base. However, security requirements need to be taken into account. For menu options 1, 2, and 3, a user should be authorized to access the information that the chatbot is retrieving, so the chatbot should capture user credentials and pass those credentials to the backend services for access validation. In addition, we need to remember CA Principle 5 (“Architect for Build, Test and Deploy”) and include a simple monitoring capability in the initial design to track performance and gather information about any potential system issue.
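The security and monitoring points above can be illustrated with a minimal Python sketch. It is not the framework's API: `MenuChatbot`, `Credentials`, `BackendAccessDenied`, and the `letter_of_credit_status` backend stub are hypothetical names introduced only for illustration, and the menu label is invented. The sketch assumes that the chatbot forwards credentials unchanged, that the backend decides whether access is allowed, and that each request is timed and logged.

```python
import time
from dataclasses import dataclass
from typing import Callable, Dict, List, Tuple


@dataclass(frozen=True)
class Credentials:
    user_id: str
    token: str


class BackendAccessDenied(Exception):
    """Raised by a (hypothetical) backend that rejects the credentials."""


# A backend handler receives the user's credentials and returns a text reply.
BackendHandler = Callable[[Credentials], str]


class MenuChatbot:
    def __init__(self, options: Dict[str, Tuple[str, BackendHandler]]):
        self.options = options
        self.metrics: List[dict] = []  # simple in-memory monitoring log

    def menu(self) -> str:
        return "\n".join(
            f"{key}. {label}" for key, (label, _) in self.options.items()
        )

    def handle(self, choice: str, credentials: Credentials) -> str:
        if choice not in self.options:
            return "Sorry, that is not a valid option.\n" + self.menu()
        _, handler = self.options[choice]
        started = time.perf_counter()
        try:
            # Credentials are passed through; the backend validates access.
            reply = handler(credentials)
            outcome = "ok"
        except BackendAccessDenied:
            reply = "You are not authorized to view this information."
            outcome = "denied"
        elapsed = time.perf_counter() - started
        self.metrics.append(
            {"option": choice, "outcome": outcome, "seconds": elapsed}
        )
        return reply


def letter_of_credit_status(credentials: Credentials) -> str:
    # Stand-in for a real backend call (assumption, not a real service).
    if credentials.token != "valid-token":
        raise BackendAccessDenied(credentials.user_id)
    return "Letter of credit LC-001 is pending approval."


bot = MenuChatbot({"1": ("Letter of credit status", letter_of_credit_status)})
print(bot.menu())
print(bot.handle("1", Credentials("alice", "valid-token")))
```

Keeping access validation in the backend services means the chatbot itself holds no authorization rules, which keeps the MVP small and leaves later security decisions open.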

Pilot users are pleased with the capabilities of the MVP but are also concerned with the limitations of a simple menu-based user interface. As more capabilities are added to the chatbot, the menu-based interface becomes cumbersome to use. It displays too many options and becomes too large to be shown entirely on a smartphone screen or in a small popup window. In addition, pilot users would like to jump from one menu option to another one without having to return to the main menu and navigate through the sub-menus. They would like to converse with the chatbot more familiarly, using natural language.

Step 2 – Next MVA iteration: Implementing a Natural Language Interface

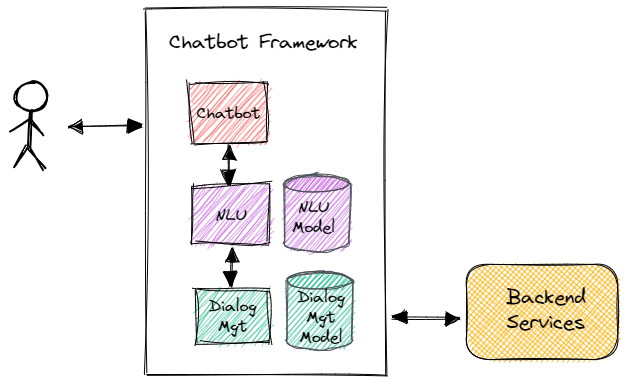

The open-source chatbot framework used for the MVP implementation includes support for Natural Language Understanding (NLU), so it makes sense to continue using this framework to add NLU to the capabilities of the chatbot. Using NLU transforms the simple chatbot into a machine learning (ML) application.

Specifically, the team needs to update the architecture of the chatbot, as shown in the accompanying diagram. Offline ingestion and preparation of training data are architecturally important steps, as are model deployment and model performance monitoring, in accordance with CA Principle 5. Monitoring the models for language recognition accuracy, throughput, and latency is especially important. Business users use specialized trade finance terms, which the chatbot gets better at understanding over time.
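As a rough illustration of the model monitoring described above, the following Python sketch tracks accuracy, throughput, and latency over a rolling window of requests. The `NluMonitor` class and its fields are hypothetical and not part of any chatbot framework. The sketch assumes that accuracy can only be computed for the subset of requests that someone has reviewed and labelled as correct or incorrect.

```python
import math
from collections import deque
from dataclasses import dataclass
from statistics import mean
from typing import Deque, Optional


@dataclass
class Observation:
    timestamp_s: float        # when the request was handled
    latency_s: float          # time taken to interpret the message
    correct: Optional[bool]   # None when no reviewed label is available


class NluMonitor:
    def __init__(self, window: int = 1000):
        # Only the most recent `window` observations are kept.
        self.observations: Deque[Observation] = deque(maxlen=window)

    def record(self, timestamp_s: float, latency_s: float,
               correct: Optional[bool] = None) -> None:
        self.observations.append(Observation(timestamp_s, latency_s, correct))

    def summary(self) -> dict:
        if not self.observations:
            return {}
        latencies = sorted(o.latency_s for o in self.observations)
        labelled = [o.correct for o in self.observations if o.correct is not None]
        span = self.observations[-1].timestamp_s - self.observations[0].timestamp_s
        # Nearest-rank 95th percentile of latency.
        p95_index = max(0, math.ceil(0.95 * len(latencies)) - 1)
        return {
            "requests": len(latencies),
            "throughput_per_s": len(latencies) / span if span > 0 else None,
            "mean_latency_s": mean(latencies),
            "p95_latency_s": latencies[p95_index],
            "accuracy": sum(labelled) / len(labelled) if labelled else None,
        }


monitor = NluMonitor()
monitor.record(timestamp_s=0.0, latency_s=0.1, correct=True)
monitor.record(timestamp_s=1.0, latency_s=0.3, correct=False)
monitor.record(timestamp_s=2.0, latency_s=0.2)
print(monitor.summary())
```

In practice these figures would be exported to whatever monitoring capability was put in place for the MVP, so that a drop in accuracy or a rise in latency becomes visible as the user base grows.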

The new architecture includes two models that need to be trained in a sandbox environment and deployed to a set of IT environments for eventual use in production. Those models include an NLU model, used by the chatbot to understand what users want to do, and a Dialog Management model used to build the dialogues so that the chatbot can satisfactorily respond to the messages. Both the models and the data they use should be treated as first-class development artifacts that are versioned.
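One possible way to treat models and training data as versioned artifacts is to record a content fingerprint for each in a manifest that is stored alongside the code. The sketch below is a simple illustration under that assumption, not a prescribed mechanism. The `fingerprint` and `build_manifest` functions and the file names are hypothetical.

```python
import hashlib
import json
import tempfile
from pathlib import Path
from typing import Iterable


def fingerprint(path: Path) -> str:
    """Return the SHA-256 digest of a file, read in chunks."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(65536), b""):
            digest.update(chunk)
    return digest.hexdigest()


def build_manifest(model_name: str, model_path: Path,
                   data_paths: Iterable[Path]) -> dict:
    """Tie a trained model to the exact training data it was built from."""
    return {
        "model": model_name,
        "model_sha256": fingerprint(model_path),
        "training_data": {p.name: fingerprint(p) for p in sorted(data_paths)},
    }


# Demonstration with throwaway files standing in for real artifacts.
with tempfile.TemporaryDirectory() as tmp:
    root = Path(tmp)
    model_file = root / "nlu_model.bin"
    model_file.write_bytes(b"model weights")
    data_file = root / "intents.yml"
    data_file.write_bytes(b"training examples")
    manifest = build_manifest("nlu", model_file, [data_file])
    print(json.dumps(manifest, indent=2))
```

A manifest like this makes it possible to tell which data produced the model deployed in each environment, and to detect when either the NLU model or the Dialog Management model needs retraining because its data has changed.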

Step 3 – Improving Performance and Scalability

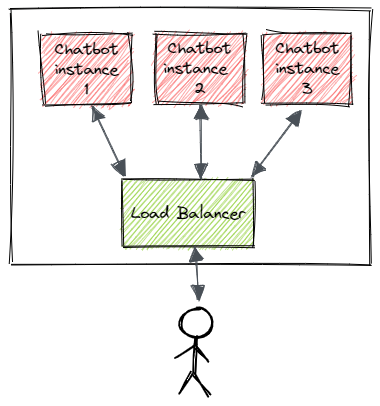

The implementation of the natural language interface is successful and, as a result, the initial user base is significantly expanded. Unfortunately, data gathered by the monitoring capability indicates that the performance of the chatbot degrades rapidly as the number of active users increases.

CA Principle 3 directs us to look for a simple solution to this issue and to avoid making drastic design decisions, such as significantly refactoring the application or switching to another chatbot framework, unless there is no alternative. A simple, less costly option would be to deploy multiple instances of the chatbot and to distribute the user requests as evenly as possible between the instances, as depicted in the accompanying diagram.

This architectural option includes a load balancer that distributes user requests evenly across the chatbot instances. It also uses “sticky sessions”, meaning that all requests in a session are routed to the same instance, so that session data stays on one server for the duration of the session and session latency is minimized. Those changes are successful, and the chatbot can now handle its expanded user base without performance issues.
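The routing behaviour described above can be sketched in a few lines of Python. This is an illustration only: a real deployment would normally rely on an off-the-shelf load balancer rather than custom code. The `StickyLoadBalancer` class and the instance names are hypothetical, and the sketch assumes a "fewest active sessions" rule for placing new sessions, which is one of several possible balancing policies.

```python
from typing import Dict, List


class StickyLoadBalancer:
    def __init__(self, instances: List[str]):
        if not instances:
            raise ValueError("at least one chatbot instance is required")
        self.instances = list(instances)
        self.assignments: Dict[str, str] = {}           # session id -> instance
        self.load: Dict[str, int] = {i: 0 for i in self.instances}

    def route(self, session_id: str) -> str:
        """Return the instance that should handle a request for this session."""
        if session_id not in self.assignments:
            # New session: choose the instance with the fewest active sessions.
            target = min(self.instances, key=lambda i: self.load[i])
            self.assignments[session_id] = target
            self.load[target] += 1
        # Existing session: always the same instance (sticky session).
        return self.assignments[session_id]

    def end_session(self, session_id: str) -> None:
        target = self.assignments.pop(session_id, None)
        if target is not None:
            self.load[target] -= 1


balancer = StickyLoadBalancer(["chatbot-1", "chatbot-2"])
for session in ["alice", "bob", "alice", "carol"]:
    print(session, "->", balancer.route(session))
```

Stickiness trades some evenness for locality: a long-running session stays on its instance even if other instances become idle, which is usually acceptable when sessions are short and numerous.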

However, new important capabilities are being requested by the stakeholders, and as a result, the chatbot architecture needs to evolve to meet these new requirements. The steps in this evolution are discussed in the next article in this series.

To Be Continued…

The second and final article in this “Minimum Viable Architecture In Practice” series covers steps 4 and 5 of this chatbot development process. We hope that you find these articles useful and that you will keep on reading them.

[1] “Minimum Viable Architecture: How To Continuously Evolve an Architectural Design over Time”, https://continuousarchitecture.com/2021/12/21/minimum-viable-architecture-how-to-continuously-evolve-an-architectural-design-over-time/

[2] See Wikipedia, https://en.wikipedia.org/wiki/Chatbot

[3] Rasa would be an example of such a framework. “Rasa Open Source Machine Learning Framework”, https://rasa.com
