This article is part of a series that provides practical advice and guidance on how to leverage the Continuous Architecture approach. All these articles, including the first article on performance, are available on the “Continuous Architecture in Practice” website at https://continuousarchitecture.com/blog/. “Performance Part 1” provides a definition of performance, discussing its importance, its relationship with other quality attributes and the architectural forces affecting it. Part 2 discusses the performance impacts of emerging trends and how to architect applications around performance modeling and testing. Part 3 is the third and final article in this series and discusses the architectural tactics available to address performance requirements. Please note that we are just presenting a summary of those topics here, due to space limitations. A full discussion, including a comprehensive list of resources for further reading, is included in Chapter 6 of our “Continuous Architecture in Practice” book.

Modern Application Performance Tactics

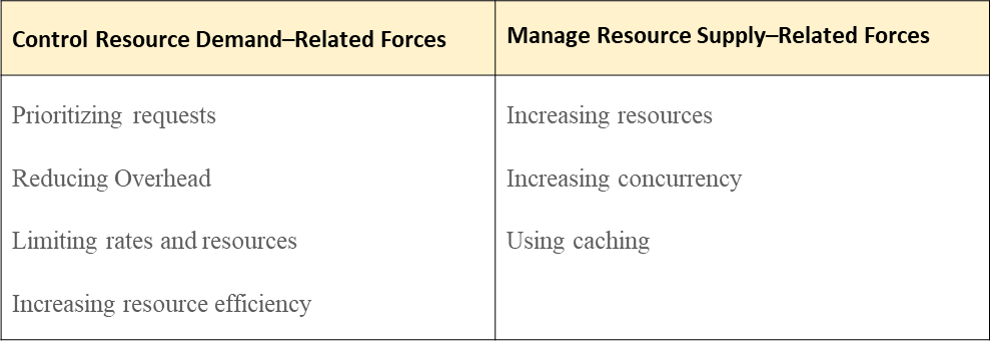

The following table lists some of the application architecture tactics that can be used by software architects and engineers to enhance the performance of a software system[1]:

Tactics to Control Resource Demand–Related Forces

The first set of application performance tactics affects the demand-related forces in order to generate less demand for the resources needed to handle requests.

- Prioritizing Requests is an effective way to ensure that the performance of high-priority requests remains acceptable when the request volumes increase, at the expense of the performance of low-priority requests. It is not always feasible, as it depends on how the system functions. One implementation option is to use a synchronous communication model for high-priority requests, while lower-priority requests could be implemented using an asynchronous model with a queuing system.

- Reducing Overhead: grouping some of the smaller services into larger services may improve performance, as designs that use a lot of smaller services for modifiability could experience performance issues. Calls between service instances create overhead and may be costly in terms of overall execution time.

- Limiting Rates and Resources: Resource governors and rate limiters, such as the SQL Server Resource Governor and the MuleSoft Anypoint API Manager are commonly used with databases and APIs to manage workload and resource consumption and thereby improve performance. They ensure that a database or a service is not overloaded by massive requests by throttling those requests.

- Increasing Resource Efficiency: Improving the efficiency of the code used in critical services decreases latency. Unlike the other tactics, which are external to the utilized components, this tactic looks internally into a component. For example, this can be implemented with periodic code reviews and tests, focusing on the algorithms used and how these algorithms utilize CPU and memory.

Tactics to Manage Resource Supply–Related Forces

The second set of application performance tactics affect the supply-related forces in order to improve the efficiency and effectiveness of the resources needed to handle requests.

- Increasing Resources: Using more powerful infrastructure, such as more powerful servers or a faster network, may improve performance. However, this approach may be harder to implement in a commercial cloud, as the cloud provider may restrict infrastructure choices. Similarly, using additional service instances and database nodes may improve overall latency, assuming that the design of the system supports concurrency.

- Increasing Concurrency involves processing requests in parallel, using techniques such as threads, additional service instances, and additional database nodes. It is also about reducing blocked time (i.e., the time a request needs to wait until the resources it needs becomes available).

- Using Caching is a powerful tactic for solving performance issues, because it is a method of saving results of a query or calculation for later reuse. Applicable caching tactics include database object cache, application object cache and proxy cache, and are described in the “Scalability as an Architectural Tactic – Part 3” article (see https://continuousarchitecture.com/blog/)

These tactics are very general, and so universally applicable, but they need to be interpreted in the context of specific problems. One of the most common problems in many modern systems is database performance, so let us explore how these general tactics become more specific to address that are of system performance”.

Modern Database Performance Tactics

Traditional database performance tactics, such as materialized views, indexes, and data denormalization, have been used by database engineers for decades. New technologies, such as NoSQL database models, full-text searches, and MapReduce, have emerged more recently to address performance requirements in the Internet era.

Materialized Views

Materialized views can be considered as a type of precompute cache. A materialized view is a physical copy of a common set of data that is frequently requested and requires database functions (e.g., joins) that are input/output (I/O) intensive. All traditional SQL databases support materialized views.

Indexes

All databases utilize indexes to access data, which are instances of reducing overhead. Without an index, the only way a database can access the data is to look through each row (if we think traditional SQL) or data item. Indexes enable the database to avoid this by creating access mechanisms that are much more performant. However, indexes are not a panacea. Creating too many indexes adds additional complexity to the database and can have a significant impact on performance and storage space. Remember that each write operation must update the index and that indexes also take up space.

Data Denormalization

Data denormalization is another instance of reducing overhead. Whereas data normalization breaks up logical entity data into multiple tables, one per logical entity, data denormalization combines that data within the same table. When data is denormalized, it uses more storage and can introduce complexity to updating data, but it can improve query performance because queries retrieve data from fewer tables. It is a good performance tactic in implementations that involve logical entities with high query rates and low update rates. Many NoSQL databases use this tactic to optimize performance, using a table per-query data modeling pattern. This tactic is closely related to materialized views, discussed previously, as the table-per-query pattern creates a materialized view for each query.

NoSQL Databases

Traditional databases and their performance tactics reached their limits in the Internet era. This created the wide adoption of NoSQL databases, which address particular performance challenges and increase resource efficiency. NoSQL technology is a broad topic, and there are few generalizations that hold across the entire category. NoSQL technology has two motivations: reduce the mismatch between programming language data structures and persistence data structures and to increase the options available to address performance concerns for specific use cases. To select the correct NoSQL database technology that addresses a performance problem requires a clear understanding of read and write patterns. For example, wide-column databases perform well with high numbers of writes but struggle with complex query operations, while relational database excel with moderate levels of write and update activity combined with complex and unpredictable query operations. A database technology choice is an expensive decision to change for two main reasons. First, particularly with NoSQL databases, the architecture of the software system is influenced by the database technology. Second, any database change requires a data migration effort, which can be a challenging exercise.

Full-Text Search

Full-text searches are not a new requirement, but they have become more and more prevalent during the internet and ecommerce era. They are another instance of increasing resource efficiency. They involve searching documents in a full-text database, without using metadata or parts of the original texts, such as titles or abstracts, stored separately from documents in another database. Full-text search engines are becoming quite common and are often used with NoSQL document stores to provide additional capabilities. For example, integrations of full-text search with document databases and key–value stores is becoming more prevalent (e.g., ElasticSearch with MongoDB or DynamoDB).

MapReduce

Another common tactic for improving performance (particularly, throughput) is to parallelize activities that can be performed over large datasets, which is an instance of increasing concurrency. One of the best known approaches for this is MapReduce, which gained widespread adoption as part of the Hadoop ecosystem used for big data systems. Other distributed processing systems for big data workloads, such as Apache Spark, use programming models that are different from MapReduce but are solving fundamentally the same problem of dealing with large, unstructured datasets. The two key performance benefits of MapReduce are that it implements parallel processing by splitting calculations among multiple nodes, which process them simultaneously, and it collocates data and processing by distributing the processing steps to the nodes where the data needed for a particular processing step resides. There are, however, some performance concerns with MapReduce. Network latency can be an issue when a large number of nodes is used. In some cases, query execution time can often be orders of magnitude higher than with modern database management systems. A significant amount of execution time is spent in setup, including task initialization, scheduling, and coordination. Therefore, as with many big data implementations, the performance you are likely to achieve depends on the nature of the software system and is affected by design and implementation choices.

To Conclude

In this article we have seen that there are many time-proven performance tactics which are general enough to be relevant across a range of different technologies and environments, but each one needs to be interpreted and applied correctly in each specific situation, as we showed when we discussed how they applied to database performance tactics.

However, as useful as performance tactics are, we have a final word of caution about them: it is important to keep CA Principle 3: “Delay design decisions until they are absolutely necessary” in mind when implementingthem, andnot to over-engineera software system to address vague performance requirements, or (even worse) to use technologies promoted by current IT trends or fads.

The next few articles in this series will continue to focus on the practical aspects of using the Continuous Architecture approach, such as how to implement other critical quality attributes (e.g. Security and Resilience) and how to use architecture to deal with technical risk associated with emerging technologies. We hope that you find those articles interesting and you will keep on reading them!

For more information on our new book, “Continuous Architecture in Practice”, which discusses performance in much more detail, please visit our website at continuousarchitecture.com.

[1] These tactics are a subset of the performance tactics described in Bass, Clements, and Kazman’s Software Architecture in Practice